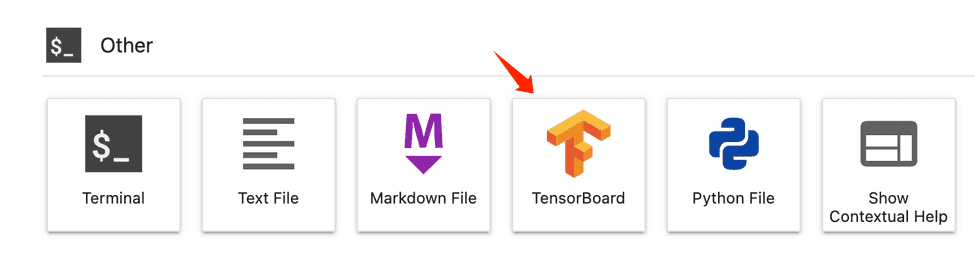

Note that you have to import the `JobService` class from the `azure.ai.ml.entities` package to configure interactive services via the SDKv2. If you want to use your own custom environment, follow the examples in this tutorial to create a custom environment.

The job is defined with `command_job = command(...)`, whose arguments include:

- `code="./src"`: the local path where the code is stored.
- `command="python main.py"`: you can add a command like `sleep 1h` so that the compute resource stays reserved after the script finishes.
- `JupyterLabJobService(...)` with `nodes="all"`: for distributed jobs, use the `nodes` property to pick which node you want to enable interactive services on.
- `log_dir="output/tblogs"`: the relative path of the TensorBoard logs (the same as in your training script).

The job is then submitted with `returned_job = ml_client.jobs.create_or_update(command_job)`.

The `services` section specifies the training applications you want to interact with. You can put `sleep` at the end of your command to specify the amount of time you want to reserve the compute resource. You can also use the `sleep infinity` command, which keeps the job alive indefinitely. For more details on how to train with the Python SDKv2, check out this article.

Alternatively, create a job YAML file, `job.yaml`, with the sample content below. Make sure to replace your compute name with your own value. If you want to use a custom environment, follow the examples in this tutorial to create a custom environment.

- `environment: azureml:AzureML-tensorflow-2.4-ubuntu18.04-py37-cuda11-gpu:41`
- The job command: you can add a command like `sleep 1h` so that the compute resource stays reserved after the script finishes running.
- `nodes: all`: for distributed jobs, use the `nodes` property to pick which node you want to enable interactive services on. If `nodes` is not set, interactive applications are enabled only on the head node by default. Valid values are `all` or a compute node index.
- `log_dir: "output/tblogs"`: the relative path of the TensorBoard logs (the same as in your training script).
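The SDK fragments above can be pulled together into one job definition. The following is a minimal sketch, not the original sample: it assumes a recent `azure-ai-ml` release that exposes the per-service classes (`JupyterLabJobService`, `VsCodeJobService`, `TensorBoardJobService`, `SshJobService`) in `azure.ai.ml.entities`. The function name, its parameters, the service keys such as `my_jupyterlab`, and the choice of `&&` to append the `sleep` are all illustrative assumptions.

```python
def build_interactive_job(compute_name, environment, ssh_public_key):
    """Return a command job with JupyterLab, VS Code, TensorBoard and SSH enabled.

    Hypothetical helper: the caller supplies the compute name, the environment
    reference and the contents of an SSH public key.
    """
    # Imported lazily so this module can be loaded without azure-ai-ml installed.
    from azure.ai.ml import command
    from azure.ai.ml.entities import (
        JupyterLabJobService,
        SshJobService,
        TensorBoardJobService,
        VsCodeJobService,
    )

    return command(
        code="./src",  # local path where the code is stored
        # Assumption: "&&" holds the node for one hour only if the script succeeds.
        command="python main.py && sleep 1h",
        environment=environment,
        compute=compute_name,
        services={
            # The dictionary keys are arbitrary display names for each service.
            "my_jupyterlab": JupyterLabJobService(nodes="all"),
            "my_vscode": VsCodeJobService(nodes="all"),
            "my_tensorboard": TensorBoardJobService(
                nodes="all",
                log_dir="output/tblogs",  # same relative path as in the training script
            ),
            "my_ssh": SshJobService(ssh_public_keys=ssh_public_key, nodes="all"),
        },
    )
```

To submit, pass the returned job to `ml_client.jobs.create_or_update(...)`, where `ml_client` is an authenticated `MLClient` for your workspace.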
Interactive training is supported on Azure Machine Learning Compute Clusters and Azure Arc-enabled Kubernetes Clusters. Before you start:

- Review getting started with training on Azure Machine Learning.
- To use VS Code, follow this guide to set up the Azure Machine Learning extension.
- Make sure your job environment has the `openssh-server` and `ipykernel ~=6.0` packages installed (all Azure Machine Learning curated training environments have these packages installed by default).
- Interactive applications can't be enabled on distributed training runs where the distribution type is anything other than PyTorch, TensorFlow, or MPI. Custom distributed training setup (configuring multi-node training without using these distribution frameworks) is not currently supported.

By specifying interactive applications at job creation, you can connect directly to the container on the compute node where your job is running. Once you have access to the job container, you can test or debug your job in the exact same environment where it would run. You can also use VS Code to attach to the running process and debug as you would locally.

When you create the job, select the training applications you want to use to interact with it; that is, define the interactive services you want to use for your job. To connect over SSH, you can use the `ssh-keygen -f ""` command to generate a public and private key pair. If you use `sleep infinity`, you will need to manually cancel the job to release the compute resource (and stop billing).
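If you build a custom environment, the package prerequisite (`openssh-server` and `ipykernel ~=6.0`) can be checked from inside that environment before you submit a job. This is a rough sketch using only the standard library. Both function names are hypothetical, the check for `openssh-server` is a heuristic that only looks for an `sshd` executable on `PATH`, and the version check treats `~=6.0` as "any 6.x release" without handling pre-releases.

```python
import shutil
from importlib import metadata


def matches_ipykernel_requirement(version: str) -> bool:
    """Return True if `version` satisfies `~=6.0`, i.e. >= 6.0 and < 7."""
    major = version.split(".")[0]
    return major.isdigit() and int(major) == 6


def check_interactive_prerequisites() -> dict:
    """Report whether sshd and a compatible ipykernel are visible here."""
    try:
        ipykernel_version = metadata.version("ipykernel")
    except metadata.PackageNotFoundError:
        ipykernel_version = None
    return {
        # Heuristic: sshd may be installed outside PATH (for example /usr/sbin).
        "openssh_server": shutil.which("sshd") is not None,
        "ipykernel": ipykernel_version is not None
        and matches_ipykernel_requirement(ipykernel_version),
    }


if __name__ == "__main__":
    print(check_interactive_prerequisites())
```

A `False` entry means only that this check could not find the package, so treat it as a prompt to inspect the environment definition instead of proof that the package is missing.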

Machine learning model training is usually an iterative process and requires significant experimentation. With the Azure Machine Learning interactive job experience, data scientists can use the Azure Machine Learning Python SDKv2, Azure Machine Learning CLIv2 or the Azure Studio to access the container where their job is running. Once the job container is accessed, users can iterate on training scripts, monitor training progress or debug the job remotely like they typically do on their local machines. Jobs can be accessed through different training applications, including JupyterLab, TensorBoard and VS Code, or by connecting to the job container directly via SSH.
